Jochen Huber

Hello!

I am Professor of Computer Science at Furtwangen University. My work is situated at the intersection of Human-Computer Interaction and Assistive Technology. I design, implement and study novel input technology in the areas of mobile, tangible & non-visual interaction and assistive augmentation.

Beyond code & electronics, I am into guitars, volleyball and cooking. I am a bike nerd, a so-so photographer, and I love to hike and snowboard.

- Sep '20 Joined Furtwangen University's Faculty of Industrial Technologies. Appointed as Professor of Computer Science. Thrilled!

- Oct '19 Joined Lucerne University's mentoring programme in CS/UX.

- Feb '19 Excited to be on the AutoUI PC this year.

- Dec '18 New book on Assistive Augmentation is out!

Recent Publications

Multi-Level Force Touch Discrimination on CIDs in Cars

Huber, Sheik-Nainar and Matic

Industrial Showcase Paper. In Proceedings of AutoUI ’17.

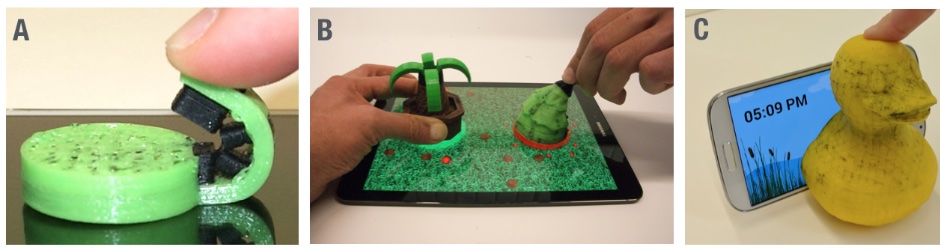

Flexibles: Deformation-Aware 3D-Printed Tangibles [...]

Schmitz, Steimle, Huber, Dezfuli and Mühlhäuser

Full Paper. In Proceedings of CHI '17.

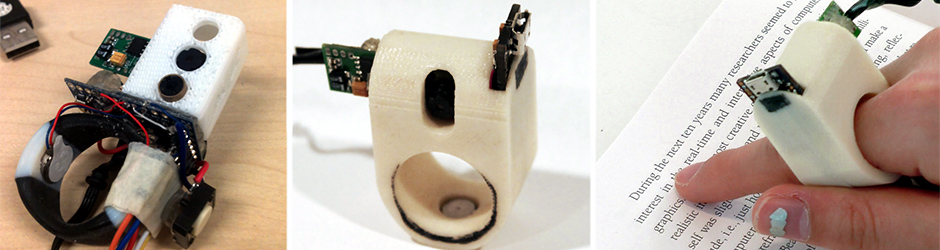

FingerReader: A Wearable Device to Explore Printed Text on the Go

Shilkrot*, Huber*, Wong, Maes and Nanayakkara

Full Paper. In Proceedings of CHI '15.

[* equal contribution]